
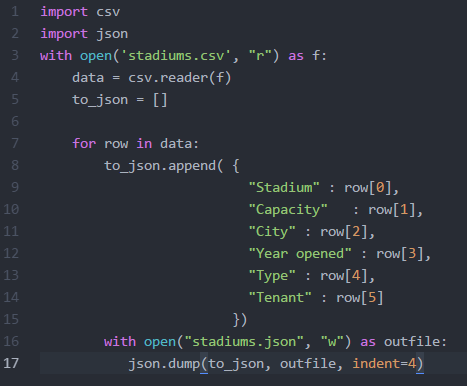

At the beginning of the week, I met with Julie to discuss and provide feedback on the interface she has been designing for our web app. We agreed on the color scheme, brainstormed ways to make the site more user friendly, and identified additional pages that will need to be added.

My priority for this week was to get back to scraping data from our other sources. I wanted to get the smaller sets completed first, so I began researching sources that list all stadiums in the US. There were a couple of sources to choose from, but Wikipedia had the simplest structure for web scraping. It has a page that lists every stadium, including capacity, city, state, year opened, type, and tenant. Because the data I needed was within one table, I was able to use Microsoft Excel's Power Query add-in. It was really simple to use. All I needed to do was provide the URL of the page that contained the table, and the application automatically parsed the page and let me choose which section I wanted to scrape. Since I decided to keep everything in JSON format, I wrote a script to parse the exported CSV file and convert it to JSON.

Since the source did not provide addresses for the stadiums, I was interested in finding out whether Google's Geocoder would be able to handle requests when given only the name of a stadium. According to the documentation, an address must be entered. I went ahead and tested it with one stadium name and actually received a successful response with accurate location data. Since that worked, I made a few adjustments to my script to include the requests library so I could make the API calls and collect the responses. However, this did not provide location data for every stadium. Some requests come back with an empty results list and the status "ZERO_RESULTS". I will revisit the list to see how many stadiums are missing location data and whether it would be too time consuming to enter that data manually.
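The script itself is not shown here, so the following is only a minimal sketch of the idea, not the actual code. It assumes the exported CSV has a header row with a column named `Stadium` (a hypothetical name), and that the Geocoding API is called at its public JSON endpoint with the `address` and `key` parameters. The function names and the `http_get` parameter are made up for illustration; `http_get` exists only so the lookup can be tried with a stand-in instead of a live request.

```python
import csv
import io
import json

# Public JSON endpoint of Google's Geocoding API.
GEOCODE_URL = "https://maps.googleapis.com/maps/api/geocode/json"


def csv_to_records(csv_text):
    """Turn CSV text (header row first) into a list of dicts, one per row."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def geocode(query, api_key, http_get=None):
    """Send a free-text query and return (status, location or None)."""
    if http_get is None:
        import requests  # third-party; imported lazily so the rest runs without it
        http_get = requests.get
    response = http_get(
        GEOCODE_URL,
        params={"address": query, "key": api_key},
        timeout=10,
    )
    payload = response.json()
    results = payload.get("results", [])
    # "ZERO_RESULTS" responses carry an empty results list.
    location = results[0]["geometry"]["location"] if results else None
    return payload.get("status"), location


def add_locations(records, api_key, http_get=None, name_column="Stadium"):
    """Attach a 'location' to every record and return the names with no match."""
    missing = []
    for record in records:
        _status, location = geocode(record[name_column], api_key, http_get)
        record["location"] = location
        if location is None:
            missing.append(record[name_column])
    return missing


def records_to_json(records):
    """Serialize the records as indented JSON text."""
    return json.dumps(records, indent=2)
```

Returning the list of unmatched names from `add_locations` makes it easy to count how many stadiums would still need their location entered by hand.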

I started researching the different real estate sites that are out there. Many of them do not allow web scraping of their data, and their APIs are very restrictive, though they do allow 1000 calls per day. Trulia has an API, but it only returns statistics at the level of cities, counties, states, etc. I will continue to research other real estate applications in the hope of finding more open data. If I do not, I can sign up for a Trulia account and start making the 1000 calls per day.


May 2016
